A Study on the Applicability of Generative Artificial Intelligence in the Automotive Design Process

Copyright Ⓒ 2025 KSAE / 239-07

This is an Open-Access article distributed under the terms of the Creative Commons Attribution Non-Commercial License(http://creativecommons.org/licenses/by-nc/3.0) which permits unrestricted non-commercial use, distribution, and reproduction in any medium provided the original work is properly cited.

Abstract

Since ChatGPT's introduction in November 2022, generative AI tools, such as DALL-E and Midjourney, have significantly impacted automotive design. DALL-E uses Convolutional Neural Networks (CNNs) to align image details with textual prompts, while Midjourney employs Generative Adversarial Networks (GANs) to generate realistic images through competing neural networks. This study employed both tools in automotive design via qualitative and quantitative research methods using generated images, extracted prompts, and adjective keywords. This study showed that DALL-E produced results closer to user intentions compared to Midjourney, supported by user surveys. The findings suggest that further research should explore language variations, different vehicle types, user expertise, and professional designer insights to expand generative AI's application in design.

Keywords:

Automotive design, Generative AI, Adjective keyword, Design process, Jaccard index

1. Introduction

Since ChatGPT launched in November 2022, numerous generative AI design tools have been released and adopted. Jordan Taylor, founder of Vizcom, an emerging generative AI tool in transportation design, said in an interview with Car Design News at the 2024 Geneva Motor Show,1) “Many people are concerned that AI advancements will take their jobs, but we are confident that AI will contribute to the acceleration, not the automation, of design workflows.” Vizcom's client list includes manufacturers such as Dell, New Balance, Ford, Mercedes-Benz, and Volvo, and the company is expanding its influence in the industry. Adobe has also integrated generative AI into its design software, with the latest version of Illustrator now available in beta. However, with so many AI tools being released and used by so many designers, this research begins with a question: Are these tools truly functioning as the designers intend?

2. Theoretical Considerations

Before evaluating the usability of generative AI, we examine the technical characteristics of the currently used AI design tools. That is, this study compares two of the most popular programs, DALL-E and Midjourney, to evaluate the usability of AI in transportation design.

2.1 Reasons for Choosing DALL-E and Midjourney

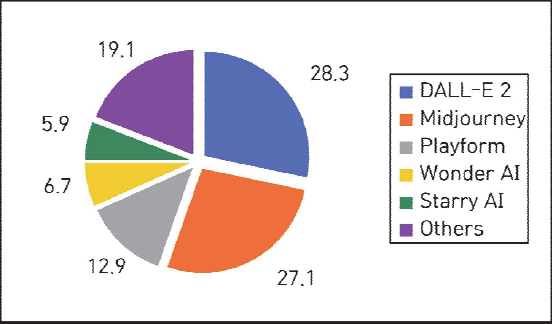

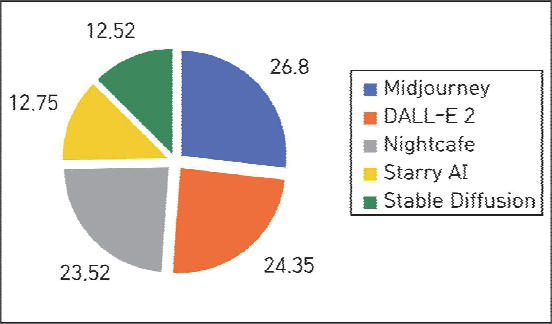

DALL-E and Midjourney were selected among the various AI design tools available because they are the most widely used applications.2) In fact, based on a recent survey conducted by Platform.io, 77.2 % of 500 artists with experience using text-to-image generative AI reported that these AI tools were useful in their work. Furthermore, in Platform.io’s AI preference survey, DALL-E and Midjourney ranked first and second, respectively (Fig. 1). According to research by Statista, the two tools have a combined market share of 51.15 % (Fig. 2), making them the most influential tools in the market.

2.2 Utilizing AI in the Design Process

Bang and Kim3) explored how to utilize AI at every stage of the product design process. Specifically, they argued that utilizing generative AI during the ideation and development stages can complement designers’ creativity while saving time and resources. Chung and Choi4) claimed that AI tools can be utilized primarily in tasks such as idea generation, design refinement, and prototype creation. However, they suggested that designers’ requirements for support tools will still vary depending on the design field, design output type, and company structure.

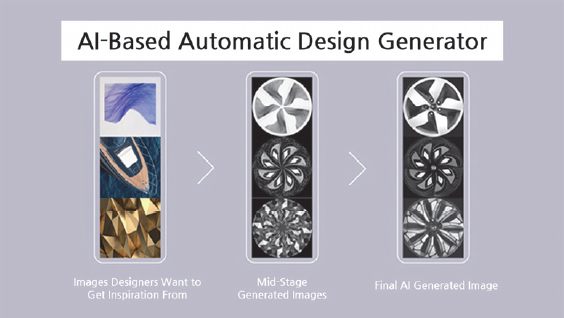

Hyundai Motor Group and Volkswagen Group are examples of actual corporate use of generative AI in the transportation design field. Both companies use customized generative AI programs. Hyundai AIRS Company,5) a subsidiary of Hyundai Motor Group, developed dedicated software and implemented it in its wheel design process.

Audi, a brand under the Volkswagen Group, has applied a design tool called Felgan6) to its wheel design process. Wheel design requires fewer considerations than the overall exterior design of a vehicle. This seems to make it a suitable candidate for piloting generative AI tools capable of generating numerous design options in a short time.

Currently, AI tools are mainly used for 2D designs, such as frontal images of wheels. However, with rapidly advancing AI technology, it is expected that soon, full 3D images of vehicles will be generated without distortion.

2.3 Analysis of Prior Research

We examined prior studies that utilized AI-based tools in the design process across the design industry. This study primarily examined papers published after 2022, when DALL-E and Midjourney launched their services, as synthesized in Table 1.

Chen et al.7) noted that automotive designers face significant challenges in verbalizing their ideas while using AI tools. Their experiments demonstrated that mobility designers’ work integrates both verbal and nonverbal expressions, making it difficult to fully reflect their opinions when inputting prompts to the AI. Therefore, they stressed the necessity of a precise understanding of how the AI responds to specific keywords to accurately use the tool.

Hwang8) designed a poster with a unified theme using various AI tools. While recognizing the usefulness of AI as a tool, the author emphasized the importance of designers setting specific prompts as supervisors. The researcher also pointed out the limitation that the study relied solely on qualitative analysis methods and thus could not be extended to quantitative findings.

Na9) studied the use of Midjourney in the idea generation phase of product design. The author assessed its effectiveness by generating results from various prompts and consulting designers regarding the results. The researcher found that Midjourney would be helpful for most initial design drafts and idea generation, but emphasized the need to integrate the designer's experience and sensibility to overcome its limitations in specific details.

Cheng and Kim10) explored the potential for creating new value using generative AI tools in spatial design. Adopting the Double Diamond model, they proposed a method for utilizing the generative tool Stable Diffusion in each phase to perform the process more efficiently. The results confirmed that AI tools do support designers in developing more creative and innovative designs.

On the other hand, Lee and Ko11) noted the paucity of research on AI image generators in architecture, so they explored their use in the architectural design process. They particularly experimented to determine whether Stable Diffusion could generate architectural concepts and three-dimensional architectural images. Although improvements were necessary to construct specific three-dimensional forms, they observed that the issues were likely to be resolved in the near future. Consequently, they anticipated that while increased productivity among architects would enhance the quality of architectural spaces, core benefits could be concentrated in the hands of a select few.

Although AI applications in the transportation sector remain underexplored, the aforementioned studies emphasize the potential of AI, as they examined cases in which AI design tools were utilized in various design fields to improve design work efficiency. However, the utility they demonstrated was still limited to the early stages of the overall design process. Additionally, even though this body of research provides valuable insights on using AI tools, designers must have a more thorough understanding of the correct procedures to ensure that the AI functions properly.

3. Experiment

Chapter 3 details the analysis for a specific transportation design task. Previous studies have primarily taken qualitative approaches, evaluating the image output of a single generative AI tool through interviews with designers. This study differs in that it quantitatively compares the results of two generative AI tools. In actual design processes, users communicate their ideas verbally (via prompts). Since prompt-based generative AI visualizes user intent, a key performance indicator is how accurately it reflects that intent. Therefore, by analyzing the correlation between the initial input prompt and the prompt converted back from the generated image, we can assess how faithfully the tool fulfills the user's original intent.

3.1 Experimental Design

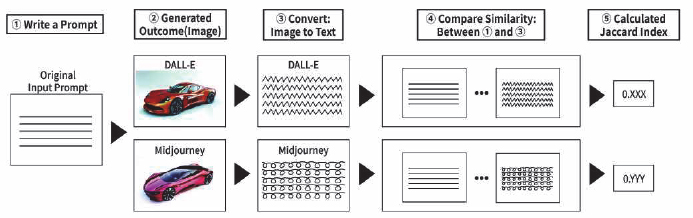

The experiment was performed in four stages. In the first stage, the same prompt was input into DALL-E and Midjourney to generate images. In the second stage, the generated images were input into each tool to extract prompts. In the third stage, emotional vocabulary related to car images was collected based on a literature review, and similar emotional vocabulary was classified into several groups. In the fourth stage, the correlation between the extracted prompts and the initially input prompts was analyzed. The similarity between the two prompts was measured based on the emotional vocabulary grouped in the previous stage, and the analysis was conducted using the Jaccard index. The overall experimental flow is illustrated in Fig. 5.

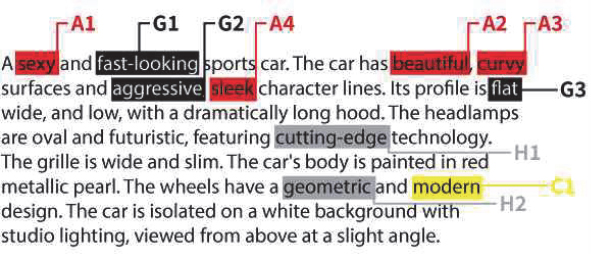

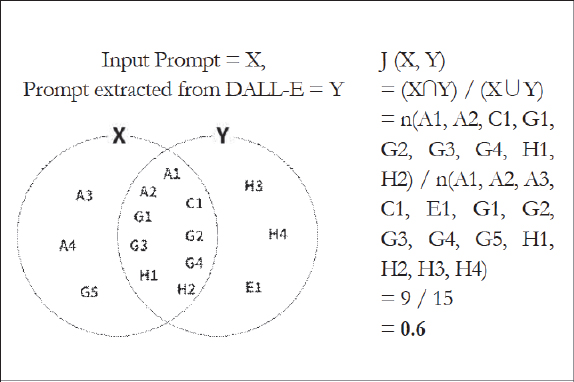

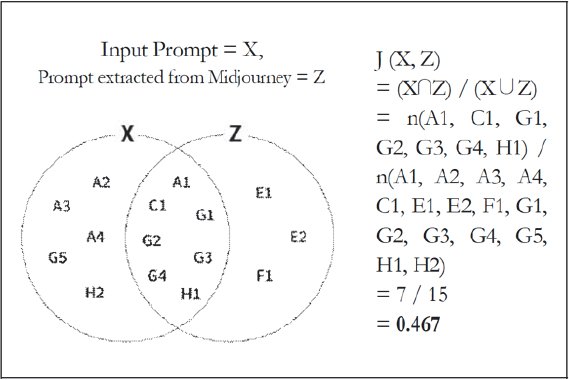

The Jaccard index is a value that measures the similarity between two sets. When comparing two texts or numeric data sets, it expresses the degree of similarity as a number. The formula for the Jaccard index is shown in Fig. 6. If the original input prompt is taken as set A, and the text converted back from the image generated by the AI tool is taken as set B, the Jaccard index of A and B measures the similarity between them. The Jaccard index provides a clear numerical value of the text similarity between two prompts, serving as a crucial indicator for comparing the relative performance of the tools. We assumed that the tool with the higher index better reflects the user's intent.
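For reference, the standard set-based definition of the Jaccard index can be written as follows, where A denotes the set of elements in the input prompt and B the set of elements in the prompt extracted from the generated image. This is the textbook form of the index; the exact notation used in Fig. 6 may differ.

```latex
J(A, B) = \frac{|A \cap B|}{|A \cup B|}, \qquad 0 \le J(A, B) \le 1
```

A value of 1 means the two sets are identical, and a value of 0 means they share no elements.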

While DALL-E does not allow variable manipulation when inputting prompts, Midjourney can artificially distort images using three parameters: stylization, weirdness, and variety. In the experiment, all three conditions were set to 0 to control the variables.

3.2 Overview and Collection of Emotional Vocabulary

Prior to analyzing the correlations between prompts, it is necessary to adjust the method for calculating the Jaccard index. Treating every word in the prompt as an element would make the denominator (the union of the two sets) excessively large, yielding an index very close to zero. Therefore, articles in English sentences were excluded, and unnecessary nouns were not considered elements.
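The filtering step described above can be sketched in Python as follows. This is only an illustrative sketch, not the procedure used in the study: the function name `prompt_elements`, the tokenization rule, and the small `EXAMPLE_VOCABULARY` set are all assumptions introduced here, and the actual vocabulary is the one compiled in Table 2.

```python
import re

# English articles to drop before comparison, as described in the text.
ARTICLES = {"a", "an", "the"}

# Hypothetical stand-in for the emotional vocabulary (not the study's list).
EXAMPLE_VOCABULARY = {"sleek", "aggressive", "elegant", "futuristic"}


def prompt_elements(prompt, vocabulary):
    """Return the set of vocabulary words that occur in a prompt."""
    # Lowercase the prompt and split it into alphabetic tokens.
    tokens = re.findall(r"[a-z]+", prompt.lower())
    # Drop articles, then keep only words that belong to the vocabulary,
    # so that unrelated nouns do not inflate the union of the two sets.
    content_words = [t for t in tokens if t not in ARTICLES]
    return {t for t in content_words if t in vocabulary}


elements = prompt_elements(
    "A sleek and aggressive sports car on the road", EXAMPLE_VOCABULARY
)
print(sorted(elements))
```

Restricting the elements to a fixed vocabulary keeps the union small, which is what prevents the index from collapsing toward zero.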

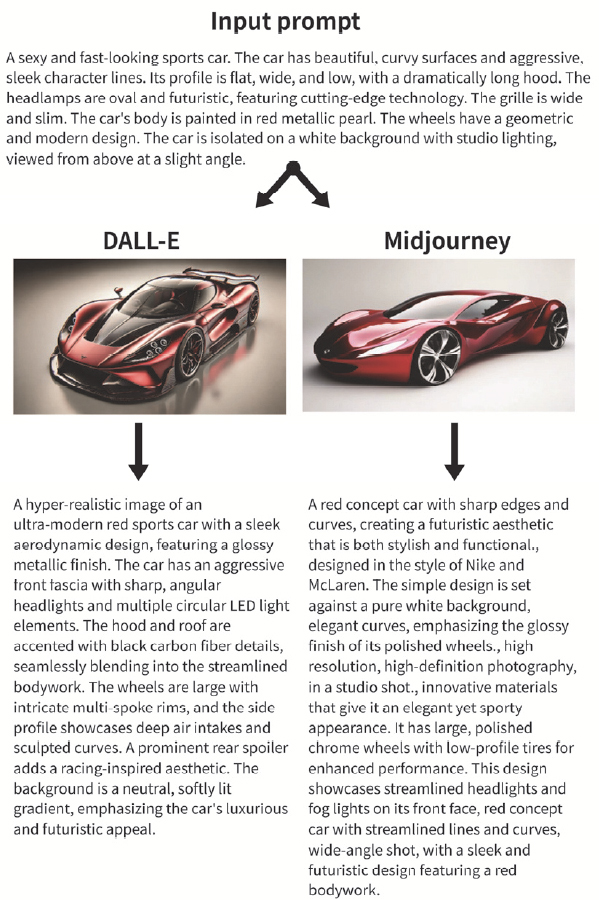

Emotional vocabulary, a key technique used to study customer emotions, is commonly used to translate consumers’ emotional needs into product design elements. This study selected vocabulary appropriate for evaluating automotive design by collecting and refining terms from the relevant literature. First, 380 words were selected from papers examining emotional vocabulary in product design by Helander et al.,12) Chae et al.,13) and Lim and Nah.14) From these, duplicate expressions were removed to condense the list to 149 words. Second, 104 words suitable for describing transportation vehicle design were selected. Third, the 104 words were divided into eight groups according to their visual formative qualities. The classification was based on the survey results obtained by Helander et al.12)

Group A was characterized by a feminine feel, Group B by a compact feel, Group C by a trendy feel, Group D by a classic feel, Group E by a luxurious feel, Group F by a masculine feel, Group G by a sporty feel, and Group H by a mechanical feel. The classifications are labelled in Table 2.

4. Experimental Results and Analysis

This section explains how we generated and compared results using the two generative AI tools. In addition to the experiments, we conducted stakeholder interviews, so that the results were evaluated both qualitatively and quantitatively. Specifically, we first converted the AI-generated images back into text and then compared the input and extracted prompts numerically.

4.1 Image and Prompt Generation Results of DALL-E and Midjourney

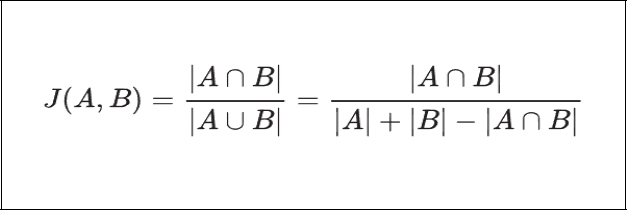

Initially, input prompts were created for DALL-E and Midjourney, and image generation was instructed based on these prompts. For both DALL-E and Midjourney, a single prompt generated four images simultaneously. The participants selected the image that best represented the input sentence and showed minimal distortion. The selected images were then uploaded to each tool and converted back into prompts. The generated results are compared in Fig. 7.

4.2 Prompt Agreement Based on Jaccard Index

The baseline input prompt was entered into the two AI tools, and images were generated using the text-to-image function. The images were then fed back into the two AI tools, and the image-to-text function was used to extract prompts.

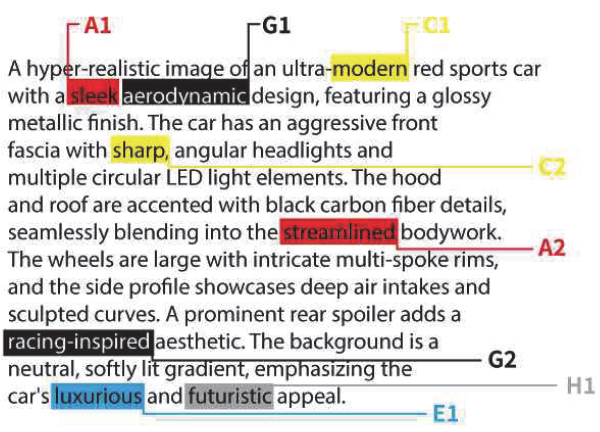

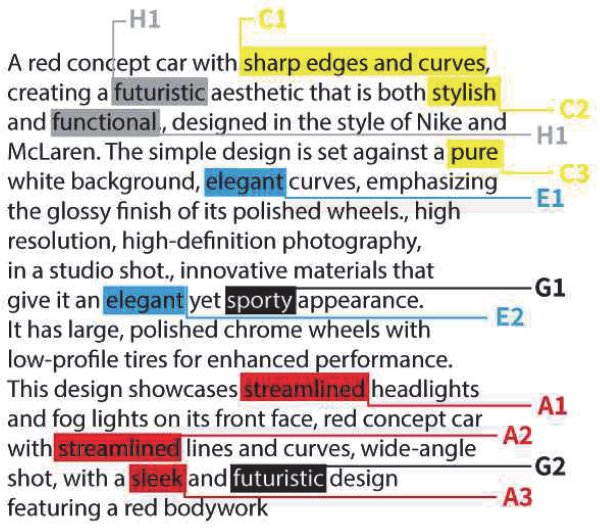

The results of replacing the words in the three prompts with the emotional vocabulary groups of Table 2 are represented in Figs. 8–10, respectively. Group A was assigned red, Group B orange, Group C yellow, Group D green, Group E blue, Group F purple, Group G black, and Group H gray. When substituting words, duplicate words were excluded, and words within the same group were designated by sequentially appending numbers, such as A1, A2, A3, etc.
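The substitution rule described above can be sketched in Python as follows. This is a hypothetical illustration: the function name `assign_labels` and the sample word-to-group mapping are invented here and do not reproduce Table 2. It also assumes that numbers are appended in order of first appearance, which the text does not specify.

```python
def assign_labels(words, word_to_group):
    """Map each distinct vocabulary word to a label such as 'A1' or 'G2'."""
    labels = {}    # word -> label, e.g. "sleek" -> "G1"
    counters = {}  # group letter -> number of labels issued so far
    for word in words:
        # Skip duplicates and words outside the emotional vocabulary.
        if word in labels or word not in word_to_group:
            continue
        group = word_to_group[word]
        counters[group] = counters.get(group, 0) + 1
        labels[word] = f"{group}{counters[group]}"
    return labels


# Hypothetical mapping, for illustration only (not the contents of Table 2).
SAMPLE_GROUPS = {"sleek": "G", "dynamic": "G", "aggressive": "F"}

labels = assign_labels(
    ["sleek", "aggressive", "sleek", "dynamic", "car"], SAMPLE_GROUPS
)
print(labels)
```

To compare two prompts, the same labeling would need to be shared between them so that a given word always receives the same label.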

The Jaccard index was calculated by comparing the prompt extracted by each AI tool with the original input prompt. The Jaccard index is the size of the intersection of two sets divided by the size of their union, which gives a value between 0 and 1. The equations for calculating the Jaccard index for each tool are displayed in Figs. 11 and 12, respectively.
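As a minimal sketch of this calculation, the following Python function computes the Jaccard index of two label sets. The function name `jaccard_index` and the two example sets are assumptions made for illustration; they are not the sets shown in Figs. 11 and 12 and do not reproduce the reported values.

```python
def jaccard_index(a, b):
    """Return |A ∩ B| / |A ∪ B| for two collections of labels."""
    set_a, set_b = set(a), set(b)
    union = set_a | set_b
    if not union:
        # Convention chosen for this sketch: two empty sets score 0.0.
        return 0.0
    return len(set_a & set_b) / len(union)


# Hypothetical label sets, for illustration only.
input_labels = {"G1", "G2", "F1", "C1"}
extracted_labels = {"G1", "F1", "E1"}

# Intersection is {G1, F1} (2 labels); union has 5 labels.
score = jaccard_index(input_labels, extracted_labels)
print(score)
```

Because the index depends only on set membership, repeated words and word order in the prompts have no effect on the result.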

Based on this, DALL-E obtained a value of 0.6 when compared to the original text, and Midjourney obtained a value of 0.467 (rounded to three decimal places), as specified in Table 3.

4.3 Interviews with Stakeholders

Interviews were conducted with 12 second-year industrial design students specializing in transportation design to analyze the experimental results from a human perspective.

Because the similarity between two texts is difficult to judge mathematically, we compared the prompts written by the researchers and the prompts converted by the AI indirectly, by asking which one better matched the generated image. This comparison showed that the AI-converted prompts were closer to the images generated by the generative AI tools.

Before the interview, the participants were briefed on the experiment flow. They were shown input prompts written by the researcher and informed that two generative AI tools were used to generate images based on these input prompts. The names of the software used in this process were not disclosed to avoid any biases that the subjects might have based on their previous experience using the selected tools.

After being shown the images generated by the first AI tool (DALL-E), the participants were asked to read the text that the same tool had converted back from the image. They were then instructed to evaluate which of the two, the input prompt or the prompt extracted by DALL-E, was more closely related to the previously viewed image. Using the same process, they were asked whether the text converted by Midjourney or the original text better described the image generated by Midjourney. The responses of all 12 college students are summarized in Table 4.

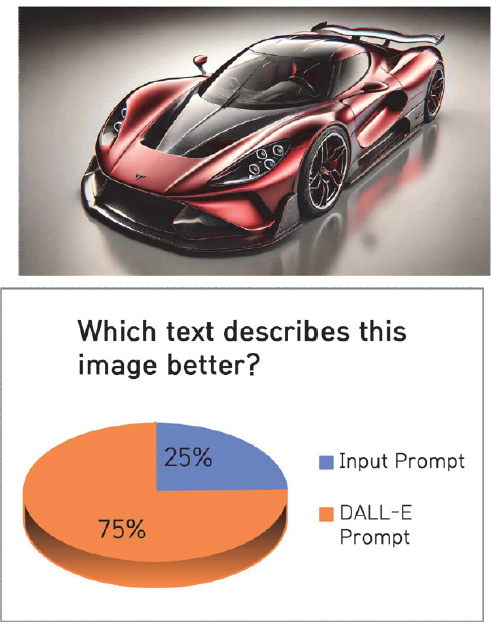

Nine out of twelve respondents reported that the prompts re-extracted from DALL-E more accurately represented the images generated by DALL-E than the original input prompts. For the images generated by Midjourney, seven out of twelve respondents judged that the text reinterpreted by Midjourney better matched the images than the original text. Expressed as percentages, 75 % of respondents selected the text regenerated by DALL-E, while 58 % selected the text generated by Midjourney (Figs. 13 and 14).

4.4 Results Analysis

After comparing the two results using the Jaccard index, DALL-E (0.6) exhibited a higher value than Midjourney (0.467), suggesting that DALL-E produced results that are relatively closer to user intent than Midjourney.

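In the student interviews, nine of twelve respondents favored the re-extracted prompt for DALL-E, compared with seven of twelve for Midjourney (a 9:7 ratio), which is consistent with DALL-E's advantage in expressive clarity.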

In both quantitative and qualitative evaluations, DALL-E outperformed Midjourney. DALL-E's Jaccard index was 28.48 % higher than Midjourney's, and its selection rate in the human preference survey was 28.58 % higher (both relative differences rounded to two decimal places). Because the quantitative analysis (Jaccard index) and qualitative analysis (interview evaluation) aligned, DALL-E can be interpreted as better reflecting user intent as a prompt-based generative AI. Conversely, Midjourney can be interpreted as being relatively more likely to expand designers’ ideas in unexpected directions.
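The two relative differences can be checked with a short calculation. The Python sketch below is illustrative only: the helper name `relative_gain_percent` is introduced here, and the use of 58.33 % as the rounded Midjourney selection rate is an assumption about the intermediate value, made because it reproduces the reported 28.58 %.

```python
def relative_gain_percent(higher, lower):
    """Return how much larger `higher` is than `lower`, in percent."""
    return (higher / lower - 1.0) * 100.0


# Jaccard indices as reported in Table 3.
jaccard_gain = relative_gain_percent(0.6, 0.467)

# Selection rates from the interviews: 9/12 = 75 %, 7/12 is about 58.33 %.
# Assumption: the rounded percentages were used as inputs.
preference_gain_rounded = relative_gain_percent(75.0, 58.33)

# The same comparison using the exact respondent counts (9 versus 7).
preference_gain_exact = relative_gain_percent(9 / 12, 7 / 12)

print(f"Jaccard gain: {jaccard_gain:.2f} %")
print(f"Preference gain (rounded inputs): {preference_gain_rounded:.2f} %")
print(f"Preference gain (exact counts): {preference_gain_exact:.2f} %")
```

With the exact counts, the preference difference comes to 28.57 %, so the last digit of that figure depends on whether the intermediate percentages were rounded first.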

The experimental results for the two AI tools can be summarized as follows: DALL-E renders input prompts very specifically and, by the quantitative measure used here, reflects the user's original intent more accurately. Midjourney can generate unexpected images even from somewhat vague prompts, providing visual inspiration for users in the early ideation stages. This study provides insights into the specific strengths of each program, which can contribute to improving the efficiency of visualization in the automotive design process.

5. Conclusion

This study analyzed the agreement and preferences between human commands and generated images to determine whether generative AI tools, specifically DALL-E and Midjourney, are suitable for transportation vehicle design. The experimental results revealed that DALL-E generated images that followed the user's intended direction more accurately than Midjourney did. However, several limitations were identified:

- 1) The emotional vocabulary collection and grouping process was conducted in Korean, but the final translation process for inputting the English prompts may not have fully reflected the meaning of the words.

- 2) While the study's prompt was set for a sports car, the results may differ if the user were asked to design other transportation vehicles.

- 3) The Jaccard index may vary depending on how well the user understands emotional vocabulary and prompts.

- 4) In interviews with stakeholders, the respondents’ understanding of transportation vehicle design and their experience may have led to different responses.

Therefore, follow-up studies could supplement the experiment by ① dividing users into multiple groups according to their native language and re-evaluating the Jaccard index, ② using prompts that describe transportation vehicles other than sports cars (e.g., SUVs, buses, airplanes, and boats) and re-evaluating the Jaccard index, ③ dividing users into multiple groups according to their proficiency in AI tools and re-evaluating the Jaccard index, and ④ interviewing working automotive designers rather than students.

Hence, we propose ways to utilize each tool in each stage of the design process, according to its characteristics. During the initial idea generation and concept-setting stages, Midjourney can be advantageous, as it provides a variety of visual inspirations even when prompts are broad, facilitating the generation of new ideas. During the design concretization stage, DALL-E would be more effective for clearly visualizing detailed design intent. Above all, based on the characteristics of each tool revealed in the study's core results, we anticipate that employing a strategy that utilizes various AI tools simultaneously according to specific stages and goals of the design process will enhance designers’ work efficiency in the automotive design process.

This study analyzed the performance and usability of DALL-E and Midjourney in automotive design and proposed criteria for selecting appropriate tools for each design stage and purpose. Amidst the rapid advancement of AI technology, this study's significance lies in providing practical guidelines to help designers strategically utilize tools appropriate to the situation. Thus, we plan to perform additional experiments to address the limitations of the current study, as well as follow-up research aimed at building a practical design process model that integrates various AI tools.

Acknowledgments

This work was supported by the 2025 Hongik University Innovation Support Program Fund.

References

- Car Design News Interview, Retrieved from https://www.cardesignnews.com/video-and-audio/we-want-to-democratise-what-it-means-to-build-physical-products-in-the-real-world/45346.article

- AI in Art Statistics 2024, Retrieved from https://www.aiprm.com/ai-art-statistics/?utm_source=chatgpt.com

- J. Bang and H. Kim, “Analysis of Impact of Generative Artificial Intelligence Technology on Product Design Process,” KSDS SDC Fall International Conference, pp.46–51, 2023.

- E. H. Chung and J. M. Choi, “Directions for AI-based Tools to support Designers’ Work Process,” Archives of Design Research, Vol.35, No.4, pp.269–282, 2022. https://doi.org/10.15187/adr.2022.11.35.4.269

- Hyundai AIRS Company, Retrieved from https://www.hyundai.co.kr/story/CONT0000000000002512

- A. Felgan, Retrieved from https://www.audi-mediacenter.com/en/press-releases/reinventing-the-wheel-felgan-inspires-new-rimdesigns-with-ai-15097

- L. Chen, Q. Jing, Y. Tsang, Q. Wang, R. Liu, D. Xia, Y. Zhou and L. Sun, “AutoSpark: Supporting Automobile Appearance Design Ideation with Kansei Engineering and Generative AI,” Proceedings of the 37th Annual ACM Symposium on User Interface Software and Technology, pp.1–19, 2024. https://doi.org/10.1145/3654777.3676337

- Y. Hwang, “The Usage of Generative AI in Poster Design,” Archives of Design Research, Vol.36, No.4, pp.291–308, 2023. https://doi.org/10.15187/adr.2023.11.36.4.291

- H. Na, “A Study on the Use of Generative AI Prompts Based on the Product Design Concept: Focusing on Generating an Image of a Home Superautomatic Espresso Machine at Using Midjourney,” Journal of Cultural Product and Design, No.76, pp.191–202, 2024. https://doi.org/10.18555/kicpd.2024.76.017

- L. T. Cheng and S. H. Kim, “A Study on the Spatial Design Process Applying AI-Generated Content (AIGC) Technology,” Journal of Korea Institute of Spatial Design, Vol.19, No.3, pp.533–544, 2024.

- D. H. Lee and S. H. Ko, “Experiment and Evaluation of Architectural Image Generation through Artificial Intelligence-Based Text Image Generation Tool,” KIEAE Journal, Vol.23, No.5, pp.13–22, 2023. https://doi.org/10.12813/kieae.2023.23.5.013

- M. G. Helander, H. Peng and H. M. Khalid, “Citarasa Engineering Model for Affective Design of Vehicles,” IEEE International Conference on Industrial Engineering and Engineering Management, pp.1282–1286, 2007. https://doi.org/10.1109/IEEM.2007.4419399

- J. Chae, Y.-S. Jang and H. Kim, “A Study on Extracting Representative Emotional Words for Analyzing Preference Factors in Product Design; Focused on Shape, Color and Texture,” Journal of Industrial Design Studies, Vol.10, No.4, pp.31–38, 2016.

- Y. B. Lim and K. Nah, “A Study on the Meaning and Vocabularies about Feelings of Luxury in Design,” Journal of the Korean Society of Design Culture, Vol.21, No.2, pp.575–588, 2015.